“DAVE”

On building infrastructure for the questions you can’t yet answer

In my previous essay on strategy-as-protocol, I made the case that AI forces transparency at the organizational level. You can’t delegate what you can’t articulate. When you try to hand strategic work to a machine, you discover that your “method” was partly invisible even to you. The protocol makes it visible. That was the argument.

What I’ve been sitting with since is that the same thing is true one level down. Not just organizational thinking, but your own.

This isn’t a product announcement. There’s nothing to download, no waitlist, no demo. What follows is where that observation led me, and a sketch of what I built when I tried to do something about it.

Chris Argyris called the gap between what people say they do and what they actually do the difference between “espoused theories” and “theories-in-use.” Strategy-as-protocol addressed that gap for organizations. But individuals have it too. You think you’re weighing evidence carefully. You think you’re holding tensions open. In practice, you’re pattern-matching, comfort-seeking, confirming what you already believe. And there’s no infrastructure for making that visible.

The Gap

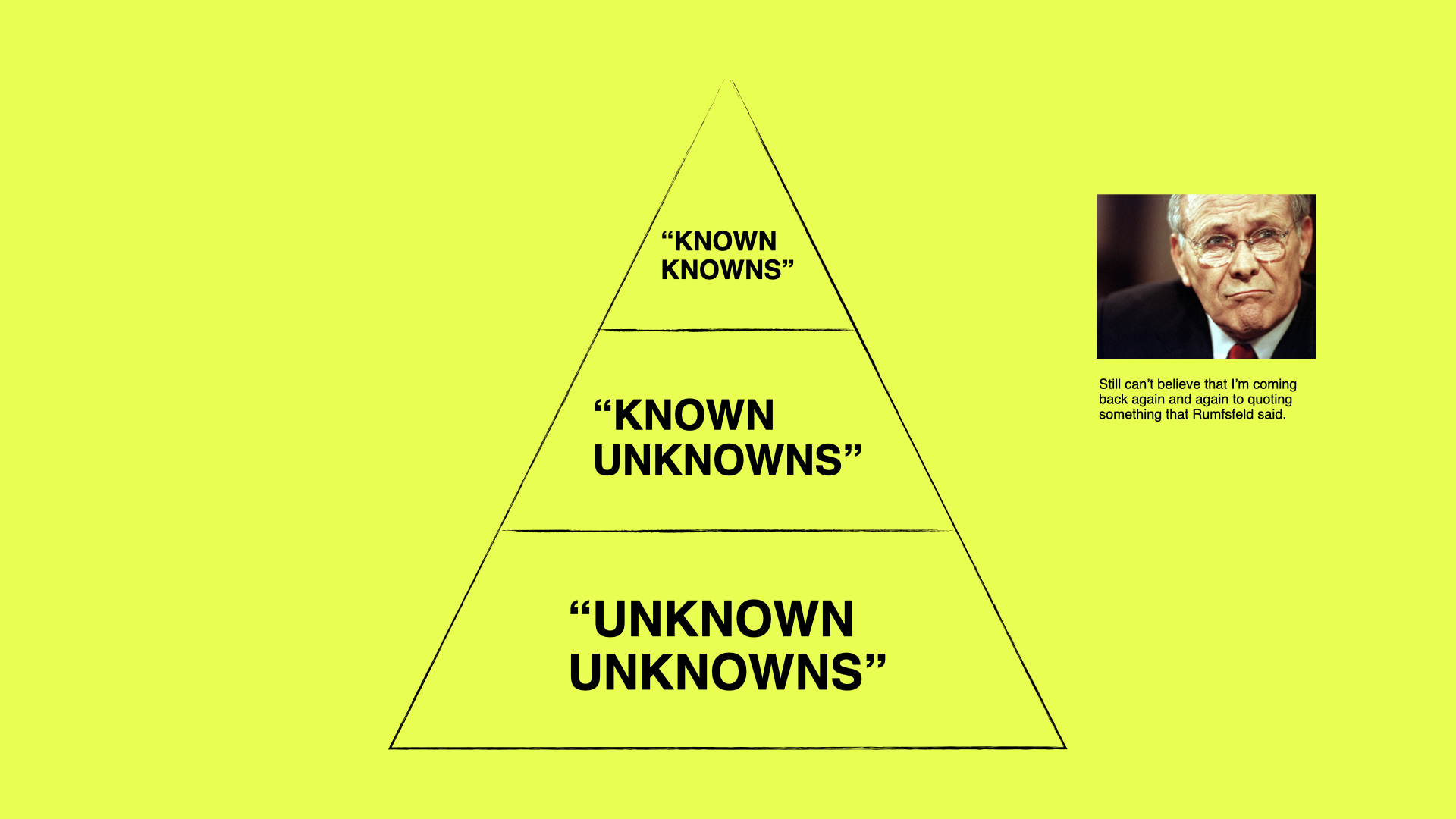

Donald Rumsfeld’s taxonomy gets mocked, but it maps something real. Most AI tools occupy the known-knowns quadrant: clear task, clear method, clear output. Write this email. Summarize this document. Generate this code. The tools are getting better at this every week.

The interesting gap is known unknowns: questions you know you’re carrying but can’t yet resolve. Strategic practitioners live here. You carry open questions across projects for weeks, sometimes months. Evidence accumulates from different contexts. Tensions form between candidate positions. The question itself shifts shape. Sometimes it crystallizes into a position. Sometimes it dissolves. Both are valid outcomes.

There’s almost nothing built for this. Every AI tool I’ve encountered wants either to answer your question or to help you execute a task. Neither is what you need when the question itself is still forming.

So I built a deliberation facilitator and named it “DAVE.” If you’ve seen the movie, you already know why it isn’t called HAL.

It has one rule: the human is the cognitive agent.

This sounds like a limitation, and that’s the point.

The cultural conversation about AI has settled into a familiar groove: AI does things for you, or AI threatens to replace you, or AI augments you. Each of these frames assumes the interesting question is about capability. What can the machine do? What can’t it? How do we divide the labor?

But there’s a different question underneath, one that doesn’t get enough air: what does it mean to think well alongside a machine that can think fast? Not “how do we keep humans in the loop,” which is a governance question. What does it actually look like to design tools that support the human as the one doing the thinking?

Anthropic’s pitch is essentially “keep thinking.” But awareness of the problem and infrastructure for the problem are different things. Saying “the human should stay in charge of reasoning” is a value statement. Building systems that actually hold the space for human reasoning is a design problem.

Designing for the Thinker

What does it mean to build a tool that holds process instead of producing answers?

Start with a distinction that Linus Lee draws between instrumental and engaged interfaces. Instrumental interfaces are ones you’d skip if you could: offered a magic button that produced the result, you’d press it. Engaged interfaces are ones where the process is the value. A tool for deliberation is engaged by definition. If you could skip the thinking and just have the answer, you’d have the wrong answer, because the thinking is how you develop the judgment to know what the answer means.

This has design consequences. An engaged interface can’t optimize for speed or convenience. It has to optimize for the quality of the process. And that means introducing friction deliberately. Research on cognitive scaffolding surfaces a paradox: AI that removes all friction doesn’t support growth. It induces complacency. A tool for thinking needs to be calibrated. Challenging enough to push you. Not so frictionless that you stop developing.

“DAVE” works through a palette of epistemic moves: some widen the space (what’s the strongest version of the position you’re resisting?), some compress it toward resolution (what has to be true for your position to hold?), some reframe the question entirely (is this still a live question, or has it quietly resolved itself?). It shifts its approach based on where a question is in its lifecycle. Those shifts are never announced. They emerge from the state of the deliberation.

None of these moves are new. Good mentors, good editors, good thinking partners have always done this. The question is what becomes possible when you add persistence.

AI memory is having its moment. ChatGPT remembers your preferences. Claude remembers your projects. A whole middleware layer is emerging to sync context across platforms. But notice what all of this remembers: facts, preferences, conclusions. Outcomes. The state of what you know, not the process of how you got there.

Deliberation needs a different kind of persistence. Not what you decided, but what you examined and why. Questions emerge from conversation and get captured. Evidence accumulates across sessions. Tensions are logged as they surface. The whole trajectory persists in an append-only event log. You can pick up a question after two weeks and see not just where you landed but where you forked, what you tested, which assumptions you revised. You can examine your own reasoning patterns. Where did you get stuck? Which moves produced shifts? What recurs?
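To make the idea of an append-only deliberation log concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not DAVE’s implementation: the event kinds, the `Event` and `DeliberationLog` classes, and the `append`/`trajectory` methods are all hypothetical names chosen for this example. The only property taken from the description above is that entries are added and replayed, never edited or removed.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Iterator

# Hypothetical event kinds, loosely following the text: questions, evidence, tensions.
EVENT_KINDS = {"question_opened", "evidence_added", "tension_logged",
               "assumption_revised", "position_taken", "question_dissolved"}


@dataclass(frozen=True)
class Event:
    """One immutable entry in the deliberation log (frozen: fields can't be reassigned)."""
    seq: int
    question_id: str
    kind: str
    note: str
    at: datetime


@dataclass
class DeliberationLog:
    """Append-only by design: the class offers no method to update or delete events."""
    _events: list[Event] = field(default_factory=list)

    def append(self, question_id: str, kind: str, note: str) -> Event:
        if kind not in EVENT_KINDS:
            raise ValueError(f"unknown event kind: {kind}")
        event = Event(len(self._events), question_id, kind, note,
                      datetime.now(timezone.utc))
        self._events.append(event)
        return event

    def trajectory(self, question_id: str) -> Iterator[Event]:
        """Replay one question's history in the order it was recorded."""
        return (e for e in self._events if e.question_id == question_id)

    def __len__(self) -> int:
        return len(self._events)


if __name__ == "__main__":
    log = DeliberationLog()
    log.append("pricing", "question_opened", "Should we move to outcome-based pricing?")
    log.append("pricing", "evidence_added", "Two clients asked for risk-sharing terms")
    log.append("hiring", "question_opened", "Generalists or specialists next?")
    log.append("pricing", "tension_logged", "Predictable revenue vs. aligned incentives")
    for e in log.trajectory("pricing"):
        print(e.seq, e.kind, "-", e.note)
```

Because events from different questions interleave in one log, the sequence numbers preserve when each step happened relative to everything else you were thinking about, which is what lets you pick a question back up after two weeks and see the path, not just the endpoint.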

This is process supervision applied to human reasoning: tracking and supporting the thinking process, not the outcome. A question that dissolved through rigorous examination is more valuable than a position reached through confirmation bias. The system watches for anti-patterns: premature closure, infinite regression, comfort-seeking. It names them when it sees them.
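One way to picture “watching for anti-patterns” is as simple heuristics over a question’s ordered event history. The Python sketch below is hypothetical throughout: the event kind names, the thresholds, and the `detect_anti_patterns` function are assumptions made for illustration, and the essay does not say how DAVE actually detects these patterns. It covers premature closure and infinite regression; comfort-seeking is left out because it is hard to see from event types alone.

```python
from collections import Counter

# Hypothetical thresholds; a real system would need to tune or learn these.
MIN_EVIDENCE_BEFORE_POSITION = 3
MAX_REFRAMES_WITHOUT_EVIDENCE = 4


def detect_anti_patterns(kinds: list[str]) -> list[str]:
    """Flag possible anti-patterns in one question's ordered event kinds.

    Heuristics only: a flag is a prompt to name the pattern, not a verdict.
    """
    flags = []

    # Premature closure: a position is taken before enough evidence
    # has accumulated, or before any tension has been examined.
    if "position_taken" in kinds:
        before = Counter(kinds[: kinds.index("position_taken")])
        if (before["evidence_added"] < MIN_EVIDENCE_BEFORE_POSITION
                or before["tension_logged"] == 0):
            flags.append("premature_closure")

    # Infinite regression: the question keeps being reframed
    # without any new evidence arriving in between.
    streak = 0
    for kind in kinds:
        if kind == "question_reframed":
            streak += 1
            if streak > MAX_REFRAMES_WITHOUT_EVIDENCE:
                flags.append("infinite_regression")
                break
        elif kind == "evidence_added":
            streak = 0

    return flags


if __name__ == "__main__":
    print(detect_anti_patterns(["question_opened", "evidence_added", "position_taken"]))
    # → ['premature_closure']
```

The design choice worth noting is that the heuristics only read the log; they never change it. Naming a pattern becomes one more event the human can accept or reject, which keeps the human as the cognitive agent.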

When the deliberation needs material, it dispatches research agents that expand the search space without collapsing the deliberation. Studies on agent-assisted foresight confirm the split: agents dramatically expand what you can survey, but the sensemaking stays with the human. The agents find material. You make meaning.

Not a tool that thinks for you. A structure that holds the space for thinking across the discontinuities of daily work.

The Cultural Question

There’s a version of the AI conversation that treats all this as a technical problem. Build better agents, add better guardrails, define better human-in-the-loop protocols. I think that misses what’s interesting.

The more interesting question is cultural. We’re acquiring powerful instruments for cognition without developing the practices for using them well. It’s not that builders are unaware. The best teams are deeply thoughtful about this. Collaborative Causal Sensemaking research, for instance, argues that AI agents should track the human’s evolving world model and surface discrepancies rather than resolve uncertainty on their behalf. The principle is understood. What’s missing is the work of carrying it from principle into practice.

We know how to build AI that produces answers. We know how to build AI that executes tasks. We’re only beginning to figure out how to build AI that holds open questions, that maintains productive ambiguity, that supports the kind of slow, accumulative reasoning that strategic work actually requires. This isn’t a gap in technology. It’s a gap in imagination about what these tools can be.

Neal Stephenson’s The Diamond Age features the Young Lady’s Illustrated Primer, a device designed to teach a child “to think for herself.” It adapts to developmental stage. It constructs narrative with the learner as protagonist. It uses a human actor for the voice. “DAVE” borrows the philosophy but applies it at a different lifecycle stage: the Primer is pedagogical, building capability toward known competencies. “DAVE” is deliberative, supporting expertise holders working through what they don’t yet know.

The Second Loop

Argyris distinguished between single-loop and double-loop learning. Single-loop: you fix problems within existing frameworks. You get better at executing the strategy you have. Double-loop: you question the frameworks themselves. You examine whether your way of thinking is actually working.

Most AI tools are single-loop. They help you do what you’re already doing, faster. Memory features are single-loop too: they store your conclusions so you can apply them more consistently next time. Nothing wrong with that. But it means the frameworks themselves never get examined.

“DAVE” is a double-loop tool. The append-only log doesn’t just preserve where you landed. It preserves where you forked, what you tested, which assumptions you revised, where you got stuck. Over time, you start seeing your own reasoning patterns. Not just what you think, but how you think. Where you reliably go wrong. Which moves actually produce shifts. What you avoid.

Strategy-as-protocol made organizational decision logic visible so it could be challenged and improved. The Custodian Shift asked who tends those conditions once execution is delegated. This is the same question, turned inward.

“DAVE” is a working prototype inside my own toolchain, built for one user working through the kinds of questions a consulting practice generates. I don’t know yet whether it works beyond that. I don’t know whether detecting cognitive depletion from text patterns is reliable enough to calibrate on. These are, appropriately, known unknowns.

But the gap is real. And what thinking needs is not answers. It’s structure that respects the thinker.